Ultimate Guide to LLM Machine Learning: How Large Language Models Transform AI in 2026

Struggling to keep up with the latest AI advancements? Large language models (LLMs) are reshaping the landscape of machine learning by enabling smarter, faster, and more efficient AI solutions. Whether you are a data scientist, AI researcher, or a tech enthusiast, understanding how LLM machine learning works can unlock a new realm of possibilities for your projects. This guide will walk you through the essentials of LLMs, their impact on traditional machine learning, and how to effectively harness their power in 2026 and beyond.

LLM machine learning refers to the use of large language models, typically built on the transformer architecture, to enhance machine learning tasks by understanding and generating human-like text. These models enable zero-shot and few-shot learning, which reduces the need for massive labeled datasets and makes AI systems more adaptable.

Understanding Large Language Models and Their Role in Machine Learning

Large language models are neural networks trained on enormous text corpora to predict and generate language. Unlike traditional machine learning models that require task-specific data, LLMs leverage vast amounts of unstructured data to learn language patterns, semantics, and context. Models like GPT, BERT, and their derivatives have set new standards in natural language processing (NLP) by modeling context and intent and by generating coherent, contextually relevant text.

These models use architectures such as transformers, which rely heavily on attention mechanisms to weigh the importance of different words in a sentence. This approach allows LLMs to capture long-range dependencies in text, a challenge for earlier models like RNNs or CNNs. The result is a model that can perform a wide variety of language tasks, including translation, summarization, question answering, and sentiment analysis, all within a single framework.

LLM applications in machine learning extend beyond NLP. They are increasingly used in code generation, medical diagnosis, and even complex decision-making tasks, proving their versatility. The ability of LLMs to generalize across tasks is a significant departure from traditional machine learning, which often requires bespoke models for each problem.

How Large Language Models Are Enhancing Traditional Machine Learning Approaches

The integration of LLMs into machine learning workflows is transforming how AI systems are developed and deployed. Traditional machine learning often depends on handcrafted features and labeled datasets for each specific task, which can be time-consuming and costly. In contrast, machine learning with large language models enables more generalized learning, significantly reducing the need for extensive labeled data.

One remarkable advantage is zero-shot and few-shot learning. LLMs can often perform tasks they were not explicitly trained on by drawing on patterns learned during pre-training. Developers can prompt the model with instructions alone (zero-shot) or with a handful of worked examples (few-shot), and the model adapts its output accordingly without any weight updates, which streamlines the development process.
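To make the zero-shot versus few-shot distinction concrete, here is a minimal sketch of how such a prompt might be assembled. The function name, the "Review:" and "Sentiment:" labels, and the sample reviews are all illustrative assumptions, not part of any particular model's API.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a prompt: instruction, worked examples, then the new input."""
    lines = [instruction.strip(), ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final block leaves the label blank for the model to complete.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Hypothetical labeled examples; an empty list would give a zero-shot prompt.
examples = [
    ("The battery lasts all day.", "positive"),
    ("The screen cracked within a week.", "negative"),
]
prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    examples,
    "Setup was quick and painless.",
)
print(prompt)
```

The resulting string would be sent to whichever model you use. Passing an empty `examples` list produces the zero-shot variant of the same prompt.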

Moreover, LLMs can serve as foundation models that are fine-tuned for specific applications. This adaptability accelerates the deployment of AI solutions in dynamic environments, such as customer support chatbots, real-time translation services, and content generation tools. Combining LLMs with traditional machine learning algorithms also produces hybrid models that pair structured data processing with unstructured text understanding.

Key Algorithms Driving the Power of Large Language Models

At the heart of LLM algorithms for AI lies the transformer architecture, introduced in the seminal paper “Attention is All You Need.” Transformers use self-attention mechanisms to dynamically weigh the significance of input tokens, enabling the model to capture complex language relationships. This architecture has become the backbone of most state-of-the-art LLMs.
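The self-attention step described above can be sketched in a few lines of plain Python. This is a simplified, single-head version without masking, and it assumes the learned query, key, and value projections have already been applied (here the toy token vectors are used directly). Production code would use a tensor library instead of nested lists.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Return (outputs, weights) for single-head attention without masking."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Each output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors (illustrative values only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, tokens)
```

Each row of `weights` sums to 1 and says how strongly one token attends to every other token. This is the dynamic weighting of input tokens that the paragraph above refers to.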

Training an LLM involves optimizing billions of parameters across many layers. Masked language modeling (used in BERT) trains the model to predict words hidden within a sequence, while autoregressive modeling (used in GPT) trains it to predict the next word. Both objectives teach the model language patterns from raw text. Additionally, innovations such as sparse attention and model parallelism have made training these massive models computationally feasible.
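The two training objectives differ mainly in how inputs and targets are built from raw text. The sketch below illustrates that difference on a toy sentence. It is a simplification: it works on whitespace tokens rather than subword tokens, and it always substitutes a "[MASK]" string, whereas BERT's published recipe sometimes keeps or randomly swaps the selected tokens. The function names are hypothetical.

```python
import random


def masked_lm_example(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens; targets map position -> original token."""
    rng = random.Random(seed)
    inputs, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            inputs.append("[MASK]")
            targets[i] = tok
        else:
            inputs.append(tok)
    return inputs, targets


def autoregressive_examples(tokens):
    """Pair every prefix with the token that follows it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


sentence = "the model predicts the next word".split()
masked_inputs, masked_targets = masked_lm_example(sentence, mask_prob=0.3)
next_word_pairs = autoregressive_examples(sentence)
```

In the masked setup the model sees context on both sides of each gap. In the autoregressive setup it only ever sees the tokens to the left of the one it must predict.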

Another important algorithmic advancement is reinforcement learning from human feedback (RLHF), which fine-tunes LLMs to align outputs with human preferences and ethical guidelines. This approach enhances the safety and usability of LLM outputs, especially in sensitive applications.
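One common ingredient of RLHF pipelines is a reward model trained on pairs of responses where a human marked one as preferred. As a hedged sketch, the pairwise loss often used for this step can be written as below. The reward values are made-up scalars standing in for a reward model's scores, and the later reinforcement learning stage is not shown.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Negative log-likelihood that the chosen response outranks the rejected one."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)), written so that exp() never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward scores for two (chosen, rejected) response pairs.
well_ranked = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
badly_ranked = preference_loss(reward_chosen=0.0, reward_rejected=2.0)
```

The loss is small when the reward model already scores the preferred response higher, and large when it ranks the pair the wrong way round. Minimizing it pushes the scores toward the human judgments.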

For those interested in the technical depth, resources like the Hugging Face Transformers documentation and the TensorFlow Transformer tutorial offer comprehensive insights into these algorithms and their implementation.

Effective Strategies for Training and Fine-Tuning LLMs in Machine Learning Projects

Training large language models from scratch demands massive datasets, computational power, and expertise, often limiting it to large organizations. However, fine-tuning pre-trained LLMs has become a practical approach for most practitioners. Fine-tuning involves adapting a general model to a specific task by training it on a smaller, task-relevant dataset.

Best practices for LLM machine learning emphasize gradual unfreezing of model layers, careful learning rate scheduling, and using domain-specific data for fine-tuning. This approach balances retaining the general knowledge of the base model while specializing it for particular applications.
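Two of these practices, learning rate scheduling and gradual unfreezing, are easy to express as small helper functions. The sketch below is one possible formulation. The peak rate, the warmup length, the layer count, and the "two layers per epoch" pace are illustrative assumptions that you would tune for your own model.

```python
def learning_rate(step, total_steps, peak_lr=2e-5, warmup_steps=100):
    """Linear warmup to peak_lr, then linear decay to zero."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / max(total_steps - warmup_steps, 1)


def trainable_layers(epoch, num_layers, layers_per_epoch=2):
    """Gradual unfreezing: train the top layers first, then unfreeze downward."""
    count = min(num_layers, (epoch + 1) * layers_per_epoch)
    return list(range(num_layers - count, num_layers))


# A hypothetical 12-layer model trained for 1,000 steps.
schedule = [learning_rate(s, total_steps=1000) for s in range(1000)]
first_epoch_layers = trainable_layers(epoch=0, num_layers=12)
```

In a real training loop you would set the optimizer's learning rate from `learning_rate(step, ...)` at each step, and mark only the layers returned by `trainable_layers` as trainable at the start of each epoch.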

Another vital aspect is data preprocessing and augmentation to improve model robustness. Since LLMs are sensitive to input quality, cleaning data and providing diverse examples can boost performance. Additionally, techniques like prompt engineering, where input queries are carefully designed, can significantly influence output quality without retraining the model.
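As a small illustration of the cleaning step, the sketch below normalizes text and removes duplicate records before fine-tuning. It uses only the Python standard library. The specific rules (NFKC normalization, whitespace collapsing, case-insensitive deduplication) are assumptions about what a reasonable baseline might look like, not a prescribed pipeline.

```python
import re
import unicodedata


def clean_text(text):
    """Normalize Unicode, drop control characters, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    text = "".join(ch for ch in text if ch.isprintable() or ch.isspace())
    return re.sub(r"\s+", " ", text).strip()


def deduplicate(records):
    """Keep the first occurrence of each record, comparing case-insensitively."""
    seen, unique = set(), []
    for record in records:
        cleaned = clean_text(record)
        key = cleaned.casefold()
        if cleaned and key not in seen:
            seen.add(key)
            unique.append(cleaned)
    return unique


# Hypothetical raw records with stray whitespace, a control character, and a duplicate.
raw = ["  Hello\u00a0  world\x00 ", "hello  WORLD", "", "Other"]
dataset = deduplicate(raw)
```

Near-duplicate detection, language filtering, and removal of personal data are common next steps, but they depend heavily on the domain and are left out here.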

Emerging methods such as parameter-efficient fine-tuning (PEFT) and adapter modules help reduce computational costs by updating only a small subset of model parameters. This is especially beneficial for integrating LLMs into existing machine learning workflows without extensive resource investment.
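To see why updating a small subset of parameters is so much cheaper, consider low-rank adaptation (LoRA), one widely used PEFT method that the text above does not name explicitly. Instead of updating a full weight matrix, it trains two thin matrices whose product has the same shape. The arithmetic below uses a hypothetical 4096 by 4096 layer and rank 8 purely for illustration.

```python
def full_update_params(d_in, d_out):
    """Parameters touched when the whole weight matrix is fine-tuned."""
    return d_in * d_out


def low_rank_update_params(d_in, d_out, rank):
    """Parameters in a rank-r update B @ A, with A: rank x d_in and B: d_out x rank."""
    return rank * d_in + d_out * rank


# Hypothetical layer size and rank, chosen only to show the scale of the saving.
d_model, rank = 4096, 8
full = full_update_params(d_model, d_model)            # 16,777,216
low = low_rank_update_params(d_model, d_model, rank)   # 65,536
fraction = low / full                                  # 0.00390625, about 0.4%
```

Under these assumptions the adapter trains roughly 0.4% of the parameters of the layer it modifies, which is where most of the memory and compute savings come from.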

Real-World Applications Showcasing LLM Machine Learning Impact

LLM integration in machine learning workflows is no longer theoretical; it’s powering numerous real-world applications across industries. Data scientists use LLMs to automate data labeling and feature extraction, accelerating the development of other machine learning models. AI researchers leverage LLMs for generating hypotheses, summarizing scientific literature, and even coding assistance.

Machine learning engineers implement LLMs in customer service chatbots that understand nuanced queries and provide human-like responses. In healthcare, LLMs assist in clinical decision support by interpreting medical records and literature to suggest diagnoses or treatment options.

Students and tech enthusiasts benefit from LLMs as educational tools that explain complex concepts, generate practice problems, and provide coding help. Moreover, content creators use LLMs for drafting articles, generating creative writing, and translating content across languages.

These use cases demonstrate how LLMs are democratizing AI, enabling diverse users to harness sophisticated language understanding without deep expertise in model training.

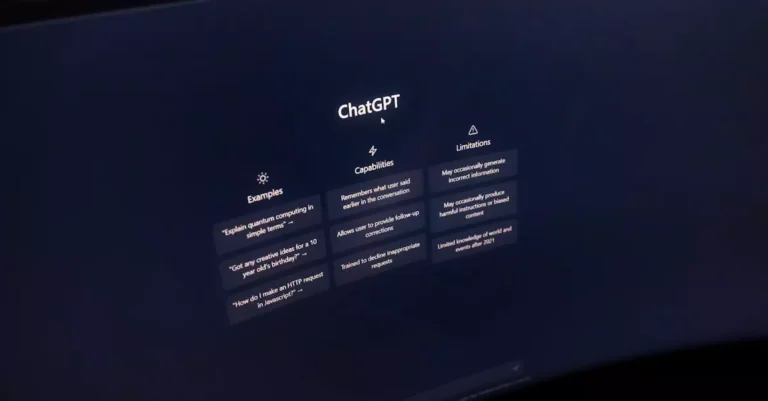

Balancing the Benefits and Challenges of Large Language Models in AI

Using LLMs in machine learning projects offers several significant advantages:

- Scalability: LLMs can handle vast amounts of unstructured data, making them suitable for diverse tasks without retraining from scratch.

- Accuracy: Their deep contextual understanding often yields superior results in language-related tasks compared to traditional models.

- Flexibility: Zero-shot and few-shot learning capabilities reduce dependency on labeled datasets, saving time and resources.

However, there are notable challenges to consider:

- Computational Cost: Training and running LLMs require significant processing power and memory, which can be prohibitive.

- Complexity: Integrating LLMs demands specialized knowledge in NLP and model fine-tuning, increasing project complexity.

- Bias and Ethics: LLMs can inadvertently learn and propagate biases present in training data, necessitating careful oversight.

Weighing these pros and cons helps organizations make informed decisions about adopting LLMs in their AI pipelines.
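The computational cost point can be made concrete with a back-of-the-envelope estimate of how much memory a model's weights occupy. The sketch below assumes a hypothetical 7-billion-parameter model and counts weights only. Activations, the attention cache, and optimizer state during training all add to this figure.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gib(num_params, dtype="float16"):
    """Approximate memory for model weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


# Hypothetical 7-billion-parameter model at three precisions.
num_params = 7_000_000_000
estimates = {dtype: weight_memory_gib(num_params, dtype) for dtype in BYTES_PER_PARAM}
```

For this example the weights need roughly 26 GiB in float32, 13 GiB in float16, and 6.5 GiB in int8, which is why reduced precision is a common first step when hardware is limited.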

Choosing the Right Large Language Model for Your Machine Learning Needs

Selecting an appropriate LLM depends on several factors including project goals, resource availability, and domain specificity. When evaluating options, consider:

- Model Size and Complexity: Larger models often perform better but require more resources. Smaller models may suffice for simpler tasks.

- Pre-training Corpus: Models trained on domain-relevant data tend to perform better in specialized applications.

- Fine-Tuning Capabilities: Assess how easily the model can be adapted to your specific task.

- Community and Support: Models with extensive documentation and active communities, such as those available on Hugging Face, offer smoother integration.

- Licensing and Cost: Open-source models reduce barriers, whereas proprietary models may offer enhanced features at a cost.

For beginners, starting with well-documented models like GPT-3, BERT, or their open-source variants provides a solid foundation. Experimentation and gradual scaling are key to mastering LLM machine learning.

Common Pitfalls to Avoid When Working with Large Language Models

Despite their power, LLMs come with pitfalls that can undermine project success if not addressed:

- Ignoring Data Quality: Feeding noisy or biased data can degrade model performance and propagate errors.

- Overfitting During Fine-Tuning: Excessive fine-tuning on small datasets may cause the model to lose its generalization capabilities.

- Underestimating Computational Needs: Running LLMs without proper infrastructure can lead to slow response times and increased costs.

- Poor Prompt Design: Ineffective prompts can produce irrelevant or inaccurate outputs, especially in few-shot learning scenarios.

- Neglecting Ethical Considerations: Deploying LLMs without bias mitigation strategies can cause harm and damage brand reputation.

By recognizing these common mistakes early, practitioners can apply corrective measures to ensure reliable and ethical AI applications.
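For the overfitting pitfall in particular, one standard corrective measure is early stopping: halt fine-tuning once validation loss stops improving. The sketch below is a minimal version. The class name, the patience value, and the sample loss values are illustrative assumptions.

```python
class EarlyStopping:
    """Signal a stop after `patience` epochs without validation improvement."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            # New best loss: remember it and reset the counter.
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def stopping_epoch(val_losses, patience=2):
    """Return the epoch index at which training would halt, or None."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses):
        if stopper.should_stop(loss):
            return epoch
    return None


# Hypothetical validation losses: improving at first, then getting worse.
halt_at = stopping_epoch([0.9, 0.7, 0.6, 0.65, 0.7, 0.8])
```

In practice you would also save a checkpoint whenever the validation loss improves, so that the best model rather than the last one is kept.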

Expert Insights on Leveraging LLMs for Advanced AI Solutions

One of the most transformative aspects of LLMs is their ability to enable zero-shot and few-shot learning. This capability allows models to generalize from minimal examples, drastically reducing the reliance on labor-intensive labeled datasets. Such efficiency accelerates AI development cycles and opens doors to applications previously limited by data scarcity.

Furthermore, integrating LLMs with traditional machine learning pipelines creates hybrid systems that combine structured data analytics with rich language understanding. This synergy leads to more holistic AI solutions capable of handling complex, multimodal tasks.

Experts also emphasize the importance of continuous monitoring and updating of LLMs to adapt to evolving language usage and domain shifts. Recent research papers provide valuable frameworks for maintaining model relevance and performance over time.

Conclusion: Embracing the Future of AI with Large Language Models

LLM machine learning is redefining how AI systems are built and deployed. By harnessing the power of large language models, practitioners can achieve higher accuracy, greater flexibility, and faster development times. While challenges such as computational costs and ethical concerns remain, adopting best practices and leveraging community resources can mitigate these issues effectively.

As we move forward in 2026, the integration of LLMs into machine learning workflows will become increasingly essential for staying competitive in AI-driven industries. Whether you’re a beginner or an experienced professional, understanding and applying the principles outlined in this guide will empower you to unlock the full potential of LLMs in your projects.

Frequently Asked Questions About LLM Machine Learning

- What distinguishes LLM machine learning from traditional machine learning?

LLM machine learning uses large language models trained on vast unstructured text data, enabling zero-shot and few-shot learning. Traditional machine learning relies more on labeled data and task-specific models, while LLMs can generalize across multiple language tasks with minimal retraining.

- How can I fine-tune a large language model for my specific project?

Fine-tuning involves training a pre-trained LLM on a smaller, task-specific dataset. Best practices include gradual layer unfreezing, careful learning rate adjustments, and using domain-relevant data to adapt the model without overfitting.

- Are large language models suitable for small businesses or individual developers?

Yes, especially through fine-tuning and using open-source models. Although training from scratch is resource-intensive, many accessible LLMs and cloud-based services allow smaller entities to leverage their capabilities effectively.

- What are the main challenges when integrating LLMs into existing machine learning workflows?

Challenges include high computational requirements, complexity of model tuning, potential bias in outputs, and the need for effective prompt engineering. Addressing these requires proper infrastructure, expertise, and ethical oversight.

- Where can I learn more about the technical aspects of transformer-based LLMs?

Comprehensive resources include the Hugging Face Transformers documentation and the TensorFlow Transformer tutorial, which provide practical guides and code examples.

- How do LLMs handle languages or domains with limited training data?

LLMs can use few-shot learning to adapt to new languages or domains from a handful of examples. Transfer learning and domain-specific fine-tuning further improve their performance in data-scarce environments.

- What ethical considerations should I keep in mind when deploying LLMs?

Key considerations include mitigating biases in training data, ensuring transparency of AI decisions, protecting user privacy, and implementing safeguards against harmful or misleading outputs.